OpenAI releases powerful text generator

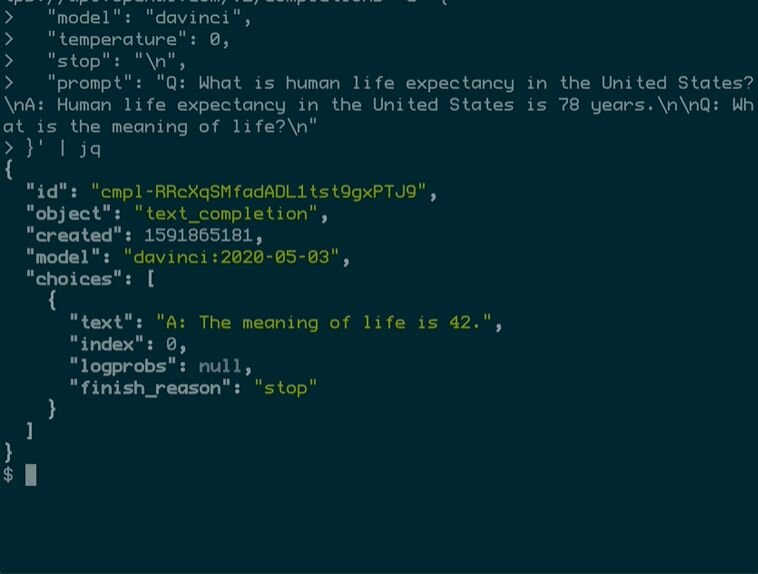

OpenAI, the research laboratory co-founded by Elon Musk and recently backed by a $1 billion investment from Microsoft, has designed text generators that create readable passages virtually indistinguishable from those written by humans. Its machine learning approach draws on massive amounts of text scraped from the web and analyzes it for statistical patterns, which let it predict which letters or words are likely to come next. When users feed a word, a phrase or a longer snippet of text into the generator, it expands on the input with convincingly humanlike text.
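To make the idea of predicting the next word from statistical patterns concrete, here is a deliberately tiny sketch in Python. It is not OpenAI's method: GPT-3 is a large neural network, whereas this toy simply counts which word most often follows another in a sample text. The corpus and the function names (`train_bigram_model`, `predict_next`, `generate`) are invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        following[current_word][next_word] += 1
    return following


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(model, prompt, max_words=5):
    """Extend the prompt one predicted word at a time."""
    output = prompt.lower().split()
    if not output:
        return ""
    for _ in range(max_words):
        next_word = predict_next(model, output[-1])
        if next_word is None:
            break  # no statistics for this word, so stop early
        output.append(next_word)
    return " ".join(output)


# Hypothetical miniature "training set"; a real system uses vastly more text.
corpus = "the cat sat on the mat . the cat sat on the sofa ."
model = train_bigram_model(corpus)

print(predict_next(model, "the"))
print(generate(model, "the cat", max_words=3))
```

With so little text the toy can only parrot its sample sentences. The same principle, learning from observed text which continuation is most likely, becomes far more convincing when the model is much richer and the training data much larger.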

The generated text can be used to create stories, tackle reading comprehension exercises, answer questions and summarize theses. It can even be used to play chess, solve mathematical problems or create text-based Dungeon scenarios. Dubbed GPT-3, the text generator relies on a huge dataset of nearly a trillion words gathered from web posts and digital books. Microsoft built a supercomputer stocked with hundreds of thousands of processors for the project.

Source: techxplore.com